Dynamic Environment Mapping for Augmented Reality Applications on Mobile Devices

21st September 2018

Abstract

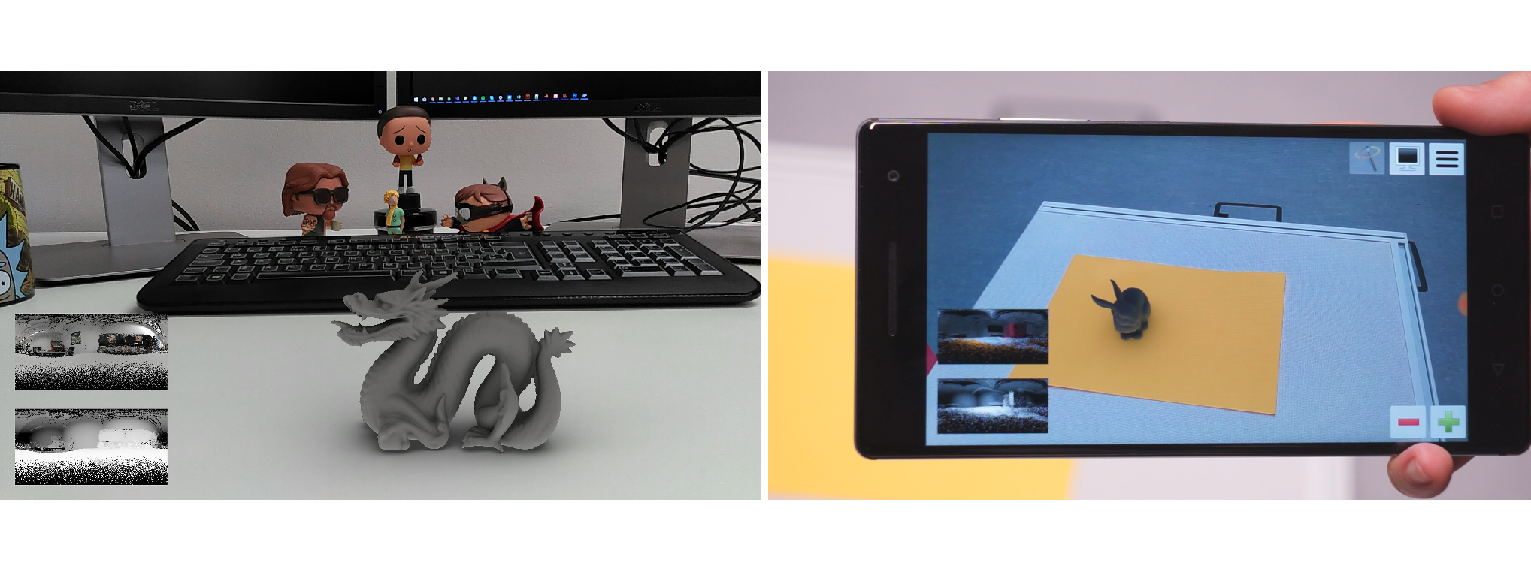

Augmented Reality is a topic of great current interest. Its main goal is to seamlessly blend virtual content into real-world scenes. Because mobile devices have limited computational power, rendering a virtual object with a high-quality, coherent appearance in real time remains an area of active research. In this work, we present a novel pipeline that couples environment acquisition with virtual object rendering on a mobile device equipped with a depth sensor. While keeping human interaction to a minimum, our system scans a real scene and projects it onto a two-dimensional environment map containing RGB+Depth data. Furthermore, we define a set of criteria for adaptively updating the environment map to account for dynamic changes in the scene. Then, under the assumption of diffuse surfaces and distant illumination, our method exploits an analytic expression for the irradiance in terms of spherical harmonic coefficients, which leads to a very efficient rendering algorithm. We show that all the processes in our pipeline can be executed while maintaining an average frame rate of 31 Hz on a mobile device.
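To illustrate why an analytic irradiance expression in spherical harmonic (SH) coefficients is cheap to evaluate, the following is a minimal sketch. It assumes the widely used nine-coefficient (bands l = 0, 1, 2) formulation for diffuse surfaces under distant lighting; the abstract does not state which exact expression the pipeline uses, so the constants, the coefficient ordering, and the function names `sh_basis` and `irradiance` are illustrative assumptions rather than the authors' implementation.

```python
import math

def sh_basis(n):
    """Real SH basis functions for bands l = 0..2, evaluated at unit vector n.

    Assumed ordering: (0,0), (1,-1), (1,0), (1,1), (2,-2), (2,-1), (2,0), (2,1), (2,2).
    """
    x, y, z = n
    return [
        0.282095,                        # Y_0,0
        0.488603 * y,                    # Y_1,-1
        0.488603 * z,                    # Y_1,0
        0.488603 * x,                    # Y_1,1
        1.092548 * x * y,                # Y_2,-2
        1.092548 * y * z,                # Y_2,-1
        0.315392 * (3.0 * z * z - 1.0),  # Y_2,0
        1.092548 * x * z,                # Y_2,1
        0.546274 * (x * x - y * y),      # Y_2,2
    ]

# Per-band weights of the clamped-cosine kernel: pi, 2*pi/3, pi/4 for l = 0, 1, 2.
A_HAT = [math.pi] + [2.0 * math.pi / 3.0] * 3 + [math.pi / 4.0] * 5

def irradiance(lighting_coeffs, normal):
    """Irradiance at a surface with unit normal `normal`.

    `lighting_coeffs` holds the 9 SH coefficients of the environment radiance
    (one colour channel), in the same ordering as `sh_basis`.
    """
    basis = sh_basis(normal)
    return sum(a * l * y for a, l, y in zip(A_HAT, lighting_coeffs, basis))

# Usage: a uniform environment of unit radiance has only the l = 0 coefficient,
# equal to sqrt(4*pi); every other coefficient is zero.
uniform = [math.sqrt(4.0 * math.pi)] + [0.0] * 8
e_up = irradiance(uniform, (0.0, 0.0, 1.0))
e_side = irradiance(uniform, (1.0, 0.0, 0.0))
```

Under these assumptions, shading a point costs nine multiply-adds per colour channel regardless of the environment map resolution, and for the uniform environment above the result is approximately π for any normal, as expected for a diffuse surface under constant unit radiance.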