SAUCE H2020 Crowd Scene Synthesis

18th February 2021

SAUCE was a three-year EU Research and Innovation project between Universitat Pompeu Fabra, Foundry, DNEG, Brno University of Technology, Filmakademie Baden-Württemberg, Saarland University, Trinity College Dublin and Disney Research. Its aim was to create a step-change in allowing creative industry companies to re-use existing digital assets for future productions. The project ran from 1st January 2018 to 31st December 2020.

The goal of SAUCE was to produce, pilot and demonstrate a set of professional tools and techniques that reduce the costs for the production of enhanced digital content for the creative industries by increasing the potential for re-purposing and re-use of content as well as providing significantly improved technologies for digital content production and management.

V-SENSE was specifically involved in crowd simulation for semantic animation, the development of light field technologies and the development of tools to automatically create skeletons from human character meshes.

Crowd Simulation for Semantic Animation

The SAUCE project proposed to investigate the use of semantic data related to the scene to drive crowd animation. TCD investigated this topic from multiple perspectives, culminating in a Unity-based toolset that has been publicly released on GitHub.

We first developed a classifier that takes a motion capture file in the Biovision (.bvh) format and returns the associated class. The code has been released on GitHub. An associated search interface was developed to allow efficient search and batch download of animations, so that crowd members can be given diverse behaviour. More information on this research can be found here. The following video offers a summary of how the classifier can be used in practice:

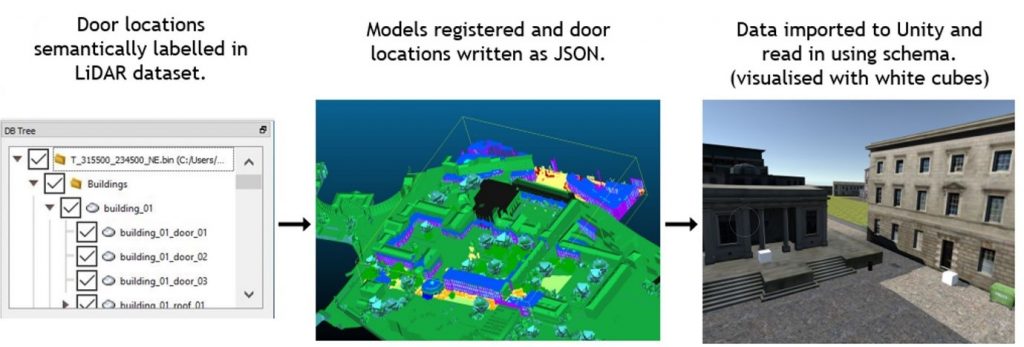
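As a rough illustration of the input side of such a classifier, the sketch below reads the standard sections of a .bvh file (HIERARCHY and MOTION) and returns one row of channel values per frame, which is the kind of sequence a motion classifier could consume. It is not the released classifier code; the function name `parse_bvh` and the tiny `SAMPLE_BVH` clip are hypothetical and exist only for this example.

```python
from typing import List, Tuple

# Hypothetical two-joint, two-frame clip used only for illustration.
SAMPLE_BVH = """HIERARCHY
ROOT Hips
{
  OFFSET 0.0 0.0 0.0
  CHANNELS 6 Xposition Yposition Zposition Zrotation Xrotation Yrotation
  JOINT Spine
  {
    OFFSET 0.0 5.0 0.0
    CHANNELS 3 Zrotation Xrotation Yrotation
    End Site
    {
      OFFSET 0.0 5.0 0.0
    }
  }
}
MOTION
Frames: 2
Frame Time: 0.033333
0 0 0 0 0 0 0 0 0
1 0 0 0 0 0 10 0 0
"""


def parse_bvh(text: str) -> Tuple[List[str], float, List[List[float]]]:
    """Return (joint names, frame time in seconds, per-frame channel values)."""
    lines = [ln.strip() for ln in text.splitlines() if ln.strip()]
    joints: List[str] = []
    n_channels = 0
    i = 0
    # HIERARCHY section: collect joint names and count the declared channels.
    while i < len(lines) and lines[i] != "MOTION":
        tokens = lines[i].split()
        if tokens[0] in ("ROOT", "JOINT"):
            joints.append(tokens[1])
        elif tokens[0] == "CHANNELS":
            n_channels += int(tokens[1])
        i += 1
    if i == len(lines):
        raise ValueError("no MOTION section found")
    # MOTION section: "Frames: N", "Frame Time: t", then N rows of floats.
    n_frames = int(lines[i + 1].split()[-1])
    frame_time = float(lines[i + 2].split()[-1])
    rows = lines[i + 3 : i + 3 + n_frames]
    if len(rows) != n_frames:
        raise ValueError("fewer motion rows than declared frames")
    frames = [[float(v) for v in row.split()] for row in rows]
    for frame in frames:
        if len(frame) != n_channels:
            raise ValueError("frame width does not match channel count")
    return joints, frame_time, frames


joints, frame_time, frames = parse_bvh(SAMPLE_BVH)
```

The resulting `frames` list has one entry per frame and one value per channel, so it can be passed to any sequence model after whatever normalisation that model expects.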

Subsequently, TCD released a dense, semantically annotated LiDAR point cloud, with an ontology providing labels that can be used to determine crowd behaviour. Details related to this work can be found here. A simple use case for this semantic annotation is demonstrated in the image below, where door locations are used as “spawn points” for crowd members.
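The spawn-point idea can be sketched in a few lines. The example below assumes, purely for illustration, that each point carries a string label and an instance identifier and that the z axis points up; the actual file layout and label names of the released dataset may differ. The function name `spawn_points` is hypothetical.

```python
from collections import defaultdict
from typing import Dict, List, Sequence, Tuple

Point = Tuple[float, float, float]


def spawn_points(
    points: Sequence[Point],
    labels: Sequence[str],
    instance_ids: Sequence[int],
    target_label: str = "door",  # assumed label name
) -> Dict[int, Point]:
    """Return one spawn location per labelled instance (e.g. per door)."""
    groups: Dict[int, List[Point]] = defaultdict(list)
    for point, label, instance in zip(points, labels, instance_ids):
        if label == target_label:
            groups[instance].append(point)
    spawns: Dict[int, Point] = {}
    for instance, pts in groups.items():
        n = len(pts)
        # Horizontal centroid of the instance, placed at its lowest point
        # so that agents appear at ground level (assumes z is up).
        cx = sum(p[0] for p in pts) / n
        cy = sum(p[1] for p in pts) / n
        ground = min(p[2] for p in pts)
        spawns[instance] = (cx, cy, ground)
    return spawns


cloud = [(0.0, 0.0, 0.0), (2.0, 0.0, 2.0), (5.0, 5.0, 1.0), (9.0, 9.0, 0.0)]
cloud_labels = ["door", "door", "window", "door"]
cloud_instances = [1, 1, 2, 3]
spawns = spawn_points(cloud, cloud_labels, cloud_instances)
```

Grouping by instance rather than by label alone keeps each door as a separate spawn location instead of averaging all doors into one point.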

We also released a Unity-based toolset which uses semantic information in the virtual Unity environment to define a crowd’s behaviour. This toolset mainly comprises:

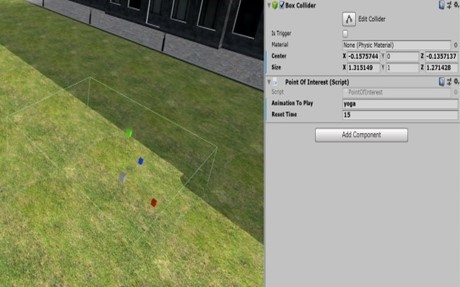

- The design of a system to semantically annotate a virtual environment, with a corresponding API to parse semantic labels into C# objects to be used directly with navigation and behaviour modules.

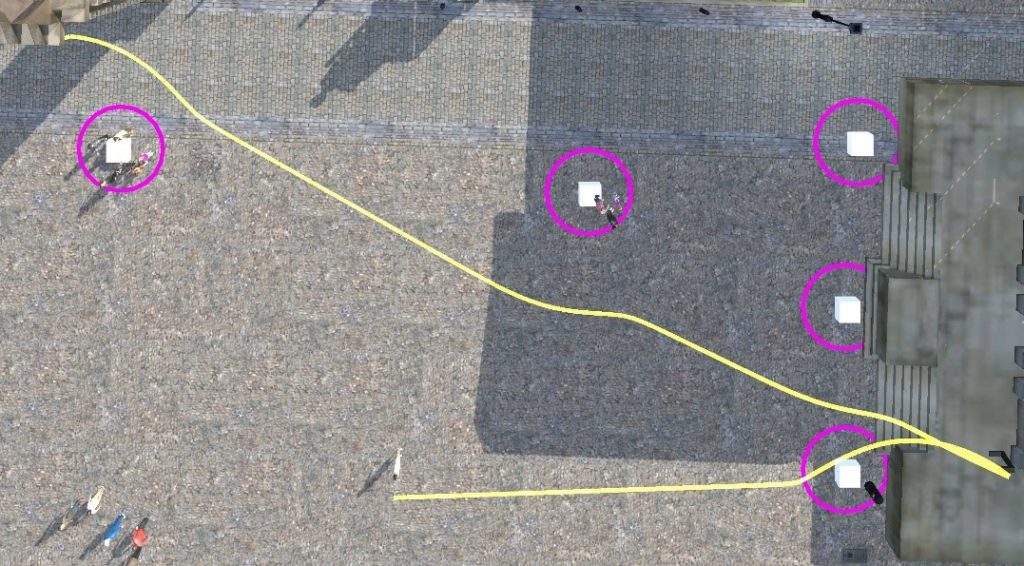

- The implementation of potential field and behaviour trigger modules which depend on environmental semantics and a path compression algorithm which preserves trajectories.
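The two components above can be pictured with a small sketch: semantic annotations are parsed into typed objects, and those objects then shape a potential field that steers an agent towards its goal. The toolset itself is written for Unity in C#; Python is used here only for illustration, and this is a textbook potential-field step rather than the toolset's API. The `SemanticObject` class, the `label,x,y,radius` row format and the `REPULSION` weights are all assumptions made for this example.

```python
import math
from dataclasses import dataclass
from typing import List, Sequence, Tuple

Vec2 = Tuple[float, float]


@dataclass(frozen=True)
class SemanticObject:
    label: str     # semantic class, e.g. "wall"
    x: float
    y: float
    radius: float  # distance within which the object influences agents


# Hypothetical per-label repulsion weights; unlisted labels exert no force.
REPULSION = {"wall": 1.0, "bench": 0.5}


def parse_annotations(rows: Sequence[str]) -> List[SemanticObject]:
    """Parse 'label,x,y,radius' rows (an assumed format) into typed objects."""
    objects = []
    for row in rows:
        label, x, y, radius = row.split(",")
        objects.append(SemanticObject(label.strip(), float(x), float(y), float(radius)))
    return objects


def step(pos: Vec2, goal: Vec2, objects: Sequence[SemanticObject],
         step_size: float = 0.1) -> Vec2:
    """Move one step along the combined attractive and repulsive field."""
    gx, gy = goal[0] - pos[0], goal[1] - pos[1]
    dist = math.hypot(gx, gy)
    if dist < 1e-9:
        return pos  # already at the goal
    # Attractive term: unit vector pointing at the goal.
    fx, fy = gx / dist, gy / dist
    for obj in objects:
        weight = REPULSION.get(obj.label, 0.0)
        dx, dy = pos[0] - obj.x, pos[1] - obj.y
        d = math.hypot(dx, dy)
        if weight > 0.0 and 1e-9 < d < obj.radius:
            # Repulsive term: grows linearly as the agent nears the object.
            strength = weight * (obj.radius - d) / obj.radius
            fx += strength * dx / d
            fy += strength * dy / d
    norm = math.hypot(fx, fy)
    if norm < 1e-9:
        return pos  # forces cancel; a real system would need an escape rule
    move = min(step_size, dist)  # do not overshoot the goal
    return (pos[0] + move * fx / norm, pos[1] + move * fy / norm)


scene = parse_annotations(["wall, 0.5, -0.2, 1.0"])
free_move = step((0.0, 0.0), (1.0, 0.0), [])
steered_move = step((0.0, 0.0), (1.0, 0.0), scene)
```

Keeping the label-to-weight mapping separate from the geometry means the same scene can drive different behaviour simply by changing the weights, which is the general appeal of driving navigation from semantics.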

Examples of the resulting paths and triggered behaviours can be seen in the images below:

The following videos demonstrate the crowd simulation system being used within Unity.

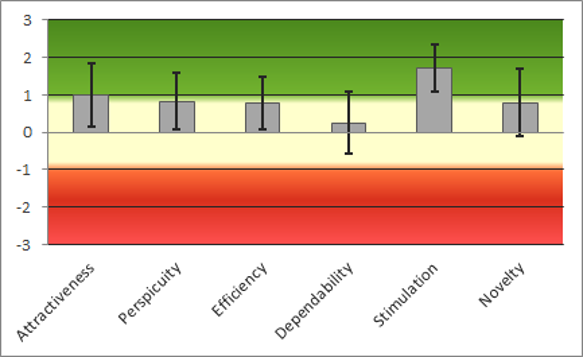

We carried out a user study to evaluate how users react to our crowd system from a Human-Computer Interaction (HCI) perspective. Since crowd simulation systems are typically used as part of a wider application, it is important that these systems are usable by non-experts in the field. Our study aimed to evaluate this using standard metrics, and also to measure the effect that the use of semantic data has in re-targeting a crowd from one domain to a semantically similar domain. An in-depth description of how the study was set up and the aggregated results can be found at the following link: (link to come). The main results are summarised in the graph below, where six key usability metrics are shown. A discussion of the results can be found in Deliverable 8.5.

A detailed and complete report of the work can be found on the SAUCE website, with the Semantic Animation work being summarised in the following documents:

Implementation:

- D6.8 Crowd scene synthesis and metrics for quality evaluation (https://www.sauceproject.eu/dyn/1602089826900/D6.8.pdf)

- D6.3 Working framework to handle relationship contexts between scene and people (https://www.sauceproject.eu/dyn/1602089826855/D6.3.pdf)

Assessment:

- D8.4 Report on Experimental Production Scenario Results (https://www.sauceproject.eu/dyn/1610534618017/D8.4.pdf)

- D8.5 Combined Evaluation Report (https://www.sauceproject.eu/dyn/1610534760525/D8.5.pdf)

Publications related to this work are as follows:

- David L. Smyth, Gareth W. Young, Jan Ondrej, Rogerio da Silva, Alan Cummins, Susheel Nath, Amar Zia Arslaan, Pisut Wisessing, and Aljosa Smolic, Semantic Crowd Re-targeting: Implementation for Real-time Applications and User Evaluations, 2021 IEEE Conference on Virtual Reality and 3D User Interfaces Abstracts and Workshops (VRW), Lisbon, 2021.

- Rogerio E. da Silva, Jan Ondrej, and Aljosa Smolic, Using LSTM for Automatic Classification of Human Motion Capture Data, 14th International Conference on Computer Graphics Theory and Applications, 2019.

- S M Iman Zolanvari, Susana Ruano, Aakanksha Rana, Alan Cummins, Rogerio Eduardo da Silva, Morteza Rahbar, and Aljosa Smolic, DublinCity: Annotated LiDAR Point Cloud and its Applications, 30th BMVC, September 2019.

This project has received funding from the European Union’s Horizon 2020 research and innovation programme under grant agreement No. 780470.