Using LSTM for Automatic Classification of Human Motion Capture Data

17th May 2019

Creative studios tend to produce an overwhelming amount of content every day, and being able to manage this data and reuse it in new productions is a way to reduce costs and increase productivity and profit.

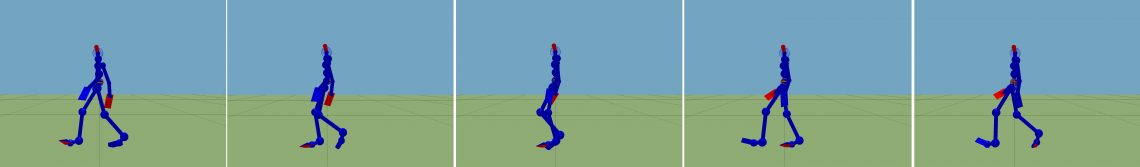

This work is part of a project aiming to develop reusable assets in creative productions. This paper describes our first attempt at using deep learning to classify human motion from motion capture files. It relies on a long short-term memory (LSTM) network trained to recognize actions from a simplified ontology of basic actions such as walking, running, or jumping. Our solution was able to recognize several actions with an accuracy over 95% in the best cases.
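To make the idea of LSTM-based motion classification concrete, here is a minimal sketch of a forward pass in NumPy. It is not the network described in the paper: the feature size (60 values per frame), hidden size, clip length, random untrained weights, and the names `init_params` and `classify_clip` are all assumptions chosen for illustration. Only the three action labels come from the text above.

```python
import numpy as np

# Action labels named in the abstract; everything else below is illustrative.
ACTIONS = ["walking", "running", "jumping"]


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def init_params(n_features, n_hidden, n_classes, seed=0):
    """Random, untrained weights (hypothetical sizes, for illustration only)."""
    rng = np.random.default_rng(seed)
    return {
        # One stacked matrix for the input, forget, output and candidate gates.
        "W": rng.normal(0.0, 0.1, (4 * n_hidden, n_features + n_hidden)),
        "b": np.zeros(4 * n_hidden),
        # Linear read-out from the last hidden state to class scores.
        "W_out": rng.normal(0.0, 0.1, (n_classes, n_hidden)),
        "b_out": np.zeros(n_classes),
    }


def classify_clip(frames, params):
    """Run a single-layer LSTM over a (time, features) clip, return class probabilities."""
    n_hidden = params["W_out"].shape[1]
    h = np.zeros(n_hidden)  # hidden state
    c = np.zeros(n_hidden)  # cell state
    for x in frames:
        z = params["W"] @ np.concatenate([x, h]) + params["b"]
        i, f, o, g = np.split(z, 4)
        c = sigmoid(f) * c + sigmoid(i) * np.tanh(g)
        h = sigmoid(o) * np.tanh(c)
    logits = params["W_out"] @ h + params["b_out"]
    exp = np.exp(logits - logits.max())  # numerically stable softmax
    return exp / exp.sum()


# Assumed input: 120 frames, each flattened to 60 joint values.
params = init_params(n_features=60, n_hidden=32, n_classes=len(ACTIONS))
clip = np.random.default_rng(1).normal(size=(120, 60))
probs = classify_clip(clip, params)
predicted = ACTIONS[int(np.argmax(probs))]
```

Because the weights are untrained, the predicted label here is meaningless. In practice the parameters would be learned from labelled motion capture clips, typically with a deep learning framework rather than hand-written NumPy.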

14th International Conference on Computer Graphics Theory and Applications 2019.