Spatio-Temporal Upsampling for Free Viewpoint Video Point Clouds

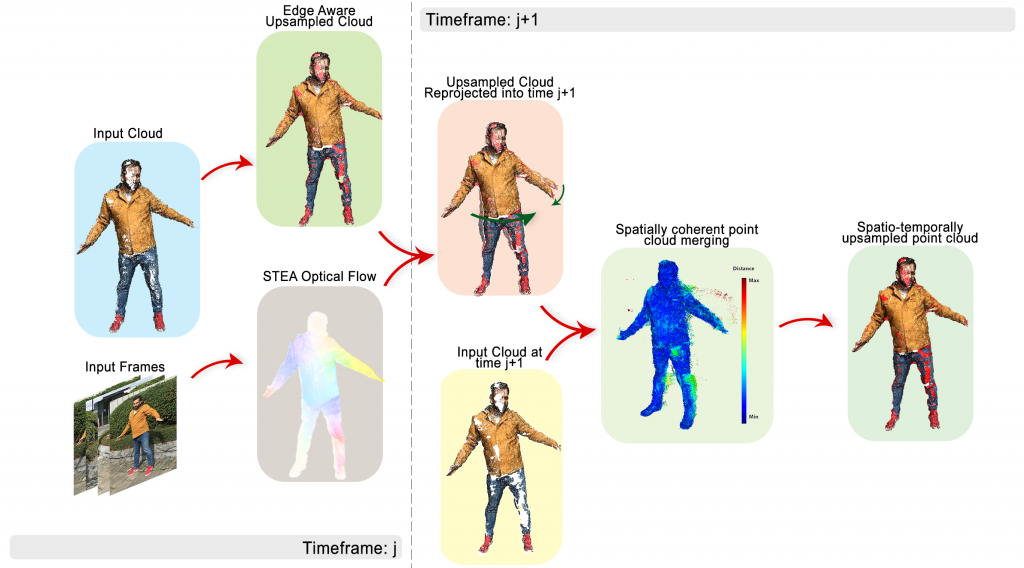

17th May 2019

This paper presents an approach to upsampling point cloud sequences captured through a wide-baseline camera setup in a spatio-temporally consistent manner. The system uses edge-aware scene flow to track the movement of 3D points across a free-viewpoint video scene and thereby impose temporal consistency. In addition to geometric upsampling, a Hausdorff distance quality metric is used to filter noise and further improve the density of each point cloud. Results show that the system produces temporally consistent point clouds, not only reducing errors and noise but also recovering details that were lost in frame-by-frame dense point cloud reconstruction. The system has been successfully tested on sequences captured with both static and handheld cameras.
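As a rough illustration of the kind of quantity a Hausdorff distance metric measures, the sketch below computes the symmetric Hausdorff distance between two small point clouds and uses the per-point nearest-neighbour distance to discard unsupported points. It is not the paper's implementation: the function names, the brute-force search, and the fixed `threshold` parameter are all assumptions made for this example.

```python
import math

def nearest_distance(p, cloud):
    """Distance from point p to its nearest neighbour in cloud (brute force)."""
    return min(math.dist(p, q) for q in cloud)

def directed_hausdorff(a, b):
    """Largest nearest-neighbour distance from any point of a to cloud b."""
    return max(nearest_distance(p, b) for p in a)

def hausdorff(a, b):
    """Symmetric Hausdorff distance: the larger of the two directed distances."""
    return max(directed_hausdorff(a, b), directed_hausdorff(b, a))

def filter_by_support(cloud, reference, threshold):
    """Keep points of cloud lying within threshold of reference.

    The fixed threshold is an assumption of this sketch, not a value
    taken from the paper.
    """
    return [p for p in cloud if nearest_distance(p, reference) <= threshold]

# Hypothetical data: frame_b contains one outlier far from frame_a.
frame_a = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
frame_b = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (5.0, 0.0, 0.0)]

distance = hausdorff(frame_a, frame_b)
cleaned = filter_by_support(frame_b, frame_a, threshold=0.5)
```

The brute-force search is quadratic in the number of points; a real pipeline would normally use a spatial index such as a k-d tree for the nearest-neighbour queries.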

2020: A Self-regulating Spatio-Temporal Filter for Volumetric Video Point Clouds

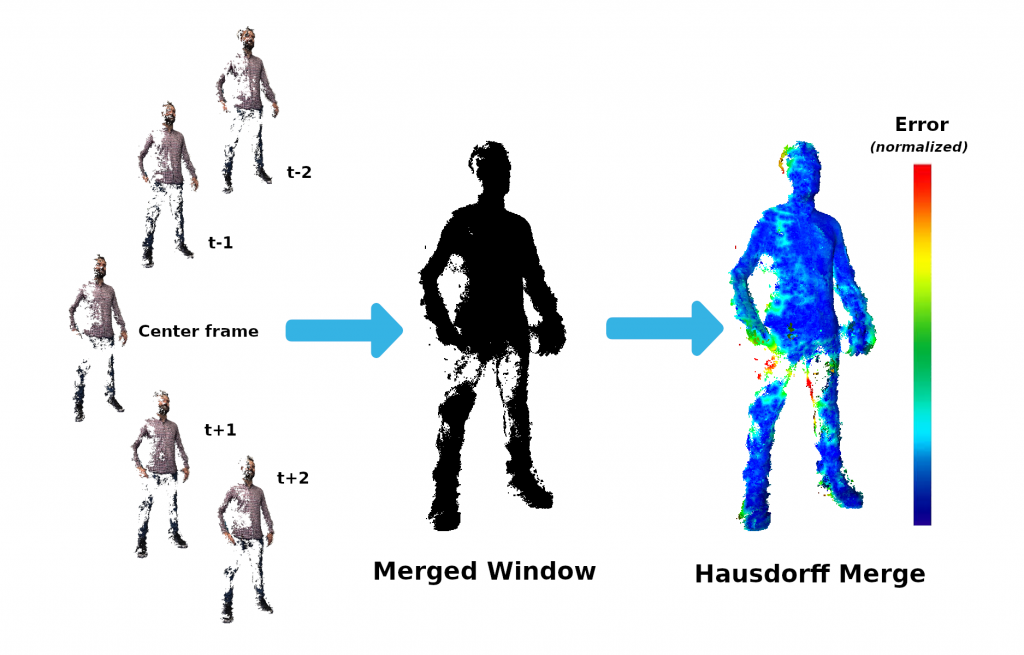

This work presents a self-regulating filter capable of accurately upsampling dynamic point cloud sequences captured using wide-baseline multi-view camera setups. This is achieved by two-way temporal projection of edge-aware upsampled point clouds, while imposing coherence and noise filtering via a windowed, self-regulating noise filter. We use state-of-the-art Spatio-Temporal Edge-Aware scene flow estimation to accurately model the motion of points across a sequence and then, leveraging the spatio-temporal inconsistency of unstructured noise, we apply a weighted Hausdorff distance-based noise filter over a given window. Our results demonstrate that this approach produces temporally coherent, upsampled point clouds while mitigating both additive and unstructured noise. In addition to filtering noise, the algorithm greatly reduces intermittent loss of pertinent geometry. The system performs well in dynamic real-world scenarios with both stationary and non-stationary cameras, as well as in synthetically rendered environments used for a baseline study.
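The idea of a weighted, windowed distance filter can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' algorithm: it assumes neighbouring frames have already been projected into the current frame by some scene flow step (not shown), it assumes weights that decay as `1 / |offset|` with temporal distance, and it uses a fixed `threshold` rather than reproducing the self-regulating behaviour described above. All names are hypothetical.

```python
import math

def nearest_distance(p, cloud):
    """Distance from point p to its nearest neighbour in cloud (brute force)."""
    return min(math.dist(p, q) for q in cloud)

def windowed_filter(current, window, threshold):
    """Keep points of the current frame that are supported across the window.

    window is a list of (offset, cloud) pairs, where offset is the nonzero
    frame offset from the current frame and cloud is assumed to be already
    motion-compensated into the current frame. The 1/|offset| weighting and
    the fixed threshold are assumptions of this sketch.
    """
    kept = []
    for p in current:
        weighted_sum = 0.0
        weight_total = 0.0
        for offset, cloud in window:
            w = 1.0 / abs(offset)  # closer frames count more
            weighted_sum += w * nearest_distance(p, cloud)
            weight_total += w
        score = weighted_sum / weight_total  # weighted mean distance
        if score <= threshold:
            kept.append(p)
    return kept

# Hypothetical data: the second point has no support in any neighbouring frame.
current = [(0.0, 0.0, 0.0), (10.0, 10.0, 10.0)]
window = [
    (-1, [(0.0, 0.0, 0.1)]),
    (1, [(0.0, 0.0, -0.1)]),
    (2, [(0.2, 0.0, 0.0)]),
]
kept = windowed_filter(current, window, threshold=0.5)
```

Because unstructured noise rarely reappears at the same motion-compensated location in neighbouring frames, its weighted mean distance tends to be large, which is why a score of this kind can separate it from persistent geometry.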